Smarter, Faster, More Precise: The Next Generation of vviinn Models

In the fast-moving world of AI, “state-of-the-art” is a temporary title. At vviinn, we have always been pioneers in AI-powered search, but we recently took a step back to fundamentally rethink our core architecture. The result is a new generation of models, led by Berry Punch and Darjeeling 2nd Flush, that bridges the gap between academic benchmarks and the gritty reality of e-commerce.

The Legacy: Where We Started

When we first built our search engine, we relied on the first waves of CLIP-based models. These were revolutionary at the time, trained on massive, general datasets to understand the relationship between images and text.

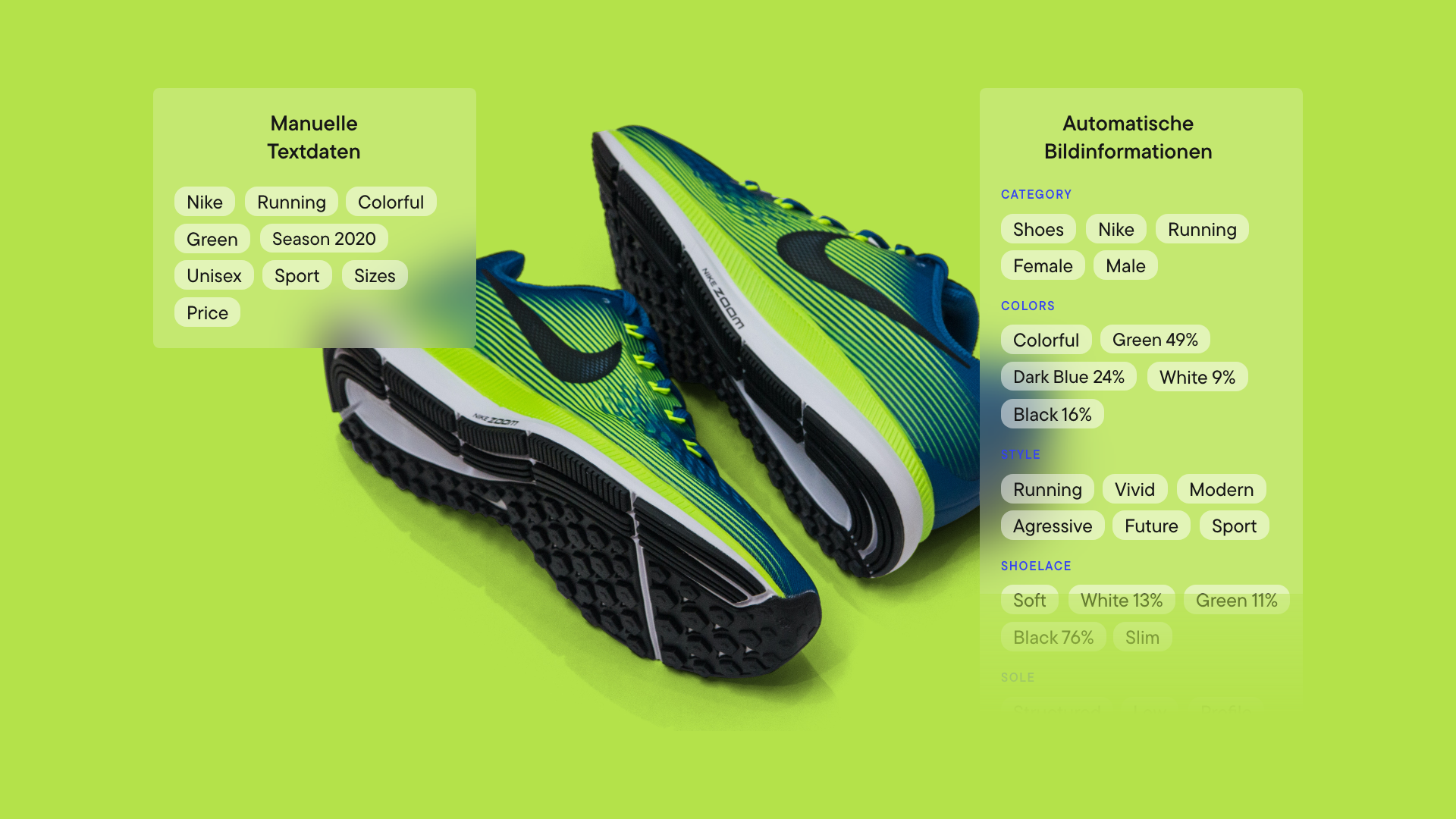

However, these early models were “generalists.” They operated on broad semantic assumptions: perfect for describing a generic photo, but often too vague for high-precision shopping. As my recent research highlighted, standard semantic supervision can blur the distinction between visually different products that share similar descriptions.

For example, a minimalist oak dining chair and a rustic oak dining table might both be semantically tagged as “wooden dining furniture.” In a standard search model, a query for the chair might incorrectly return the table because the two share the same “semantic vibe.” In e-commerce, this is a failed interaction. A user looking for a chair does not want to sit on a table.

We realized that for e-commerce, semantic similarity is not enough. We needed visual precision.

Enter “Berry Punch”: The Multimodal Powerhouse

Our answer to this challenge is Berry Punch, our newest multimodal model. It is the direct application of the MCIP (Multi-Caption Image Pairing) method detailed in our latest research paper.

Unlike standard models that often force a trade-off—sacrificing text understanding to gain better image retrieval—Berry Punch uses a novel fine-tuning strategy to master both simultaneously.

Visual Precision via MCIP: The core innovation of MCIP is generating pseudo-captions during training. This allows us to fine-tune the CLIP image encoder specifically for “visual” rather than just “semantic” image-to-image search. The result is a model that understands fine-grained details like texture, shape, and material.

One Vector, Dual Power: A critical efficiency breakthrough of MCIP is that it allows us to store only one vector per product image. While competitor solutions often require storing separate vectors for visual search and text search (doubling storage costs), our architecture maintains a single, aligned joint-embedding space. This keeps our infrastructure lean without sacrificing quality.
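To illustrate the single-vector idea, here is a minimal sketch of one index that serves both text queries and image queries. It is not our production code: the three-dimensional vectors, the product IDs, and the helper names `normalize` and `cosine_top_k` are all hypothetical stand-ins, and the query vectors simply play the role of what an aligned text encoder and image encoder would produce.

```python
import math

def normalize(v):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine_top_k(query, index, k=2):
    """Return the k product IDs whose stored vectors are closest to the query."""
    q = normalize(query)
    scored = [(sum(a * b for a, b in zip(q, vec)), pid) for pid, vec in index.items()]
    scored.sort(reverse=True)
    return [pid for _, pid in scored[:k]]

# Hypothetical toy catalog: exactly ONE stored vector per product image.
index = {
    "oak_chair": normalize([0.9, 0.1, 0.2]),
    "oak_table": normalize([0.2, 0.9, 0.2]),
    "steel_lamp": normalize([0.1, 0.2, 0.9]),
}

# Assumed encoder outputs: both query types land in the same joint space.
text_query_vec = [0.8, 0.2, 0.1]   # stand-in for an encoded text query
image_query_vec = [0.1, 0.1, 1.0]  # stand-in for an encoded query photo

print(cosine_top_k(text_query_vec, index, k=1))   # -> ['oak_chair']
print(cosine_top_k(image_query_vec, index, k=1))  # -> ['steel_lamp']
```

Because both query types are compared against the same stored vectors, the catalog does not need a second index for text search.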

German Native: Beyond the architecture, we fine-tuned Berry Punch specifically on e-commerce datasets and enhanced its German text-to-image capabilities.

Smarter Recommendations & “Shop the Look”

This technical leap translates into two distinct user benefits:

High-Precision Visual Recommendations: This is crucial for the “You May Also Like” section. If a user is viewing a specific patterned summer dress, Berry Punch doesn’t just show “other dresses.” It performs a high-precision image-to-image search and finds items with the same cut or print pattern, which makes the recommendations more likely to convert.

Smarter “Shop the Look”: Users can upload a photo of a cluttered living room, and the AI will accurately identify and find the specific sofa or lamp, even if it’s partially hidden or in a complex scene.

“Darjeeling 2nd Flush”: Deep Text Understanding & Parallel Intelligence

While Berry Punch covers the visual layer, our text specialist Darjeeling 2nd Flush elevates language understanding to a new level. It is trained to capture complex product descriptions and subtle attribute nuances with a depth that purely multimodal models often fail to achieve.

In our new architecture, every query triggers a parallel search: Berry Punch scans product images for visual matches, while Darjeeling simultaneously digs through product texts—analyzing titles, materials, and descriptions. These two result streams are then merged and curated into a single list. This ensures that whether the relevant clue is hidden in a pixel pattern or a specific keyword in the description, the system finds exactly what the user needs.
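The parallel search and merge step can be sketched as follows. This is an illustration under stated assumptions rather than our actual pipeline: `visual_search` and `text_search` are hypothetical stubs returning fixed rankings, and reciprocal rank fusion is used here only as one common way to merge two ranked lists. The post does not specify which merging strategy runs in production.

```python
from concurrent.futures import ThreadPoolExecutor

def visual_search(query):
    """Hypothetical stub for the image-side retriever (ranked product IDs)."""
    return ["sofa_12", "sofa_7", "lamp_3"]

def text_search(query):
    """Hypothetical stub for the text-side retriever (ranked product IDs)."""
    return ["sofa_7", "rug_5", "sofa_12"]

def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each item scores 1 / (k + rank) per list it appears in."""
    scores = {}
    for ranking in rankings:
        for rank, pid in enumerate(ranking, start=1):
            scores[pid] = scores.get(pid, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken alphabetically for determinism.
    return sorted(scores, key=lambda pid: (-scores[pid], pid))

def hybrid_search(query):
    """Run both retrievers concurrently, then fuse their results into one list."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        visual = pool.submit(visual_search, query)
        text = pool.submit(text_search, query)
        return reciprocal_rank_fusion([visual.result(), text.result()])

print(hybrid_search("grey corner sofa"))
# -> ['sofa_7', 'sofa_12', 'rug_5', 'lamp_3']
```

Items that both retrievers rank highly rise to the top, while items found by only one stream still make it into the merged list.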

Bigger Is Not Always Smarter

There is currently a trend toward massive Visual Large Language Models (VLLMs). While impressive, these models are computationally heavy and often slow.

Our research shows that “bigger” does not always mean “better” for search tasks. Berry Punch outperforms many larger generalist models in visual query tasks while being 10-50x faster. By focusing on specialized, efficient architectures rather than raw parameter count, we deliver superior results without the latency that kills e-commerce conversion rates.

The “Secret Sauce”: Quantization & Efficiency

To further enhance this speed, we implemented advanced dimensionality reduction and byte-quantization. This optimizes memory load and retrieval speed, allowing us to store massive product catalogs in high-speed memory without the bloat usually associated with high-dimensional vector search. The result? A single joint-embedding space that is fast, lean, and incredibly accurate.
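As a rough illustration of byte-quantization, the sketch below maps each float component to a signed byte and back. It assumes simple per-vector symmetric scaling, which may differ from the scheme we run in production; the function names and the toy vector are hypothetical, and the dimensionality-reduction step is left out entirely.

```python
import array

def quantize_int8(vec):
    """Map floats to signed bytes in [-127, 127] using one scale per vector."""
    peak = max(abs(x) for x in vec) or 1.0  # avoid division by zero for all-zero vectors
    scale = 127.0 / peak
    codes = array.array("b", (round(x * scale) for x in vec))
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float values from the stored bytes."""
    return [c / scale for c in codes]

vec = [0.12, -0.5, 0.33, 0.9]  # hypothetical embedding, shortened for readability
codes, scale = quantize_int8(vec)
restored = dequantize(codes, scale)

float_bytes = array.array("f", vec).itemsize * len(vec)  # 32-bit floats
byte_bytes = codes.itemsize * len(codes)                 # one byte per dimension

print(list(codes))
print(float_bytes, byte_bytes)
```

Each dimension shrinks from four bytes to one, and the rounding error per component stays within half a quantization step (`0.5 / scale`).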

Proven Quality

The performance of these models isn’t just theoretical. They achieve state-of-the-art results on open benchmarks, and in our internal benchmarking on manually created, product-specific test sets they also outperformed our older generation.

We view model evolution not as a destination, but as a continuous process. As “Agentic Commerce” and automated buying become reality, the need for data that is machine-readable and visually precise will only grow. We are proud to roll out Berry Punch and Darjeeling 2nd Flush—making our search smarter, faster, and more intuitive than ever.

Want to dive deeper into the math behind Berry Punch?

You can read the full breakdown of the MCIP architecture in my latest paper: A comprehensive approach to improving CLIP-based image retrieval while maintaining joint-embedding alignment
